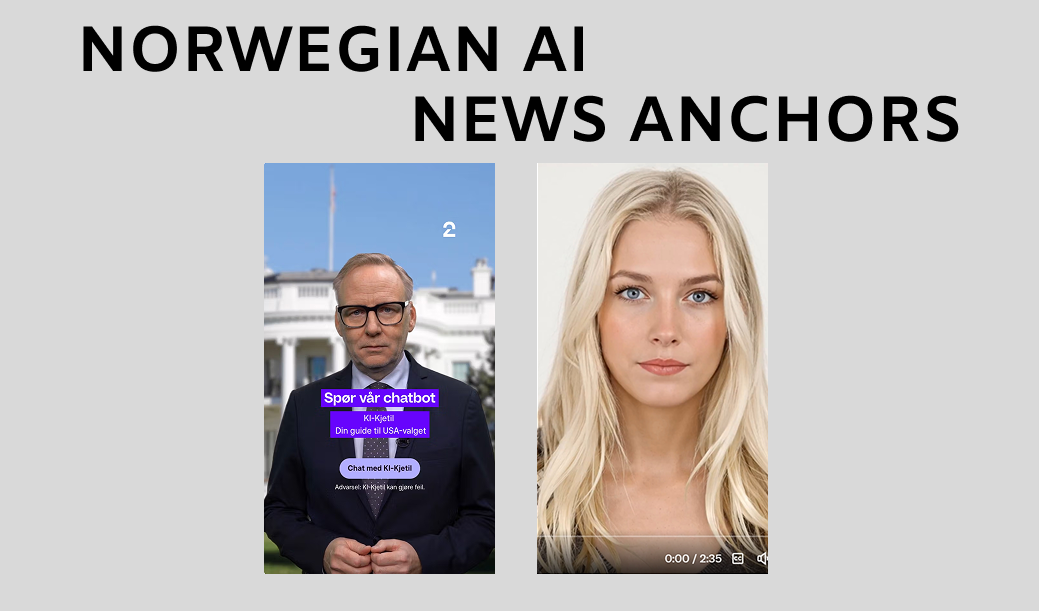

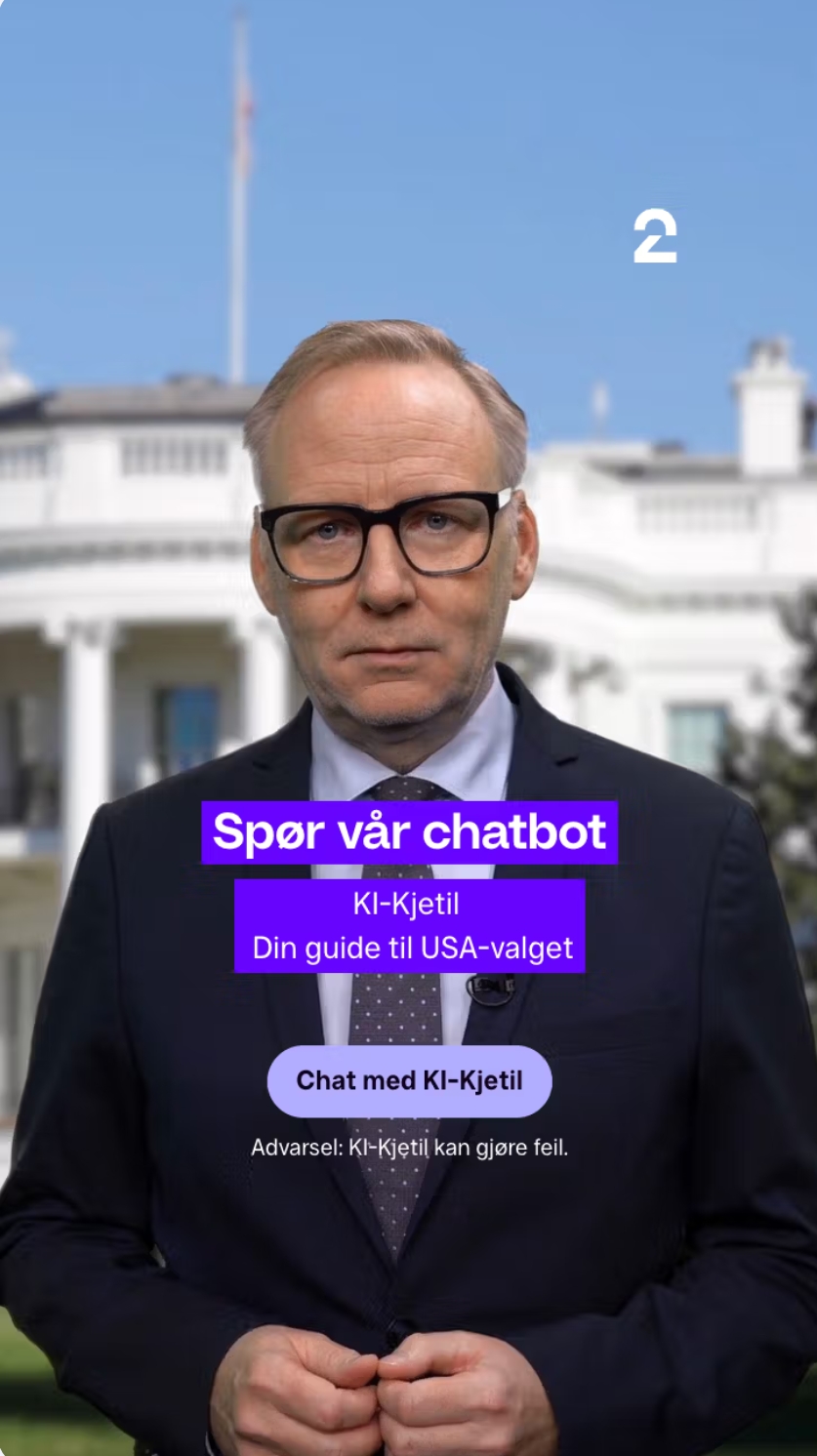

Imagine being able to ask a news anchor any question about the U.S. election and getting a clear, fact-based answer. That is exactly what TV2, one of our industry partners, achieved with their experiment KI-Kjetil. This AI-powered avatar was created to help Norwegians better understand the complexities of the American election system. Meanwhile, another AI news anchor, Ingrid, has emerged, raising concerns about transparency and trust. These two examples highlight both the opportunities and challenges of AI in journalism.

KI-Kjetil: A Transparent Approach to AI Journalism

TV2’s KI-Kjetil is a digital replica of journalist Kjetil H. Dale. Using tools such as ElevenLabs for voice synthesis and HeyGen for facial animation, the team created an avatar that looks and sounds like the real Kjetil. The aim was to make the U.S. election process easier to understand for Norwegian audiences.

What sets KI-Kjetil apart is its transparency. Kjetil H. Dale was directly involved in the project, approving how his likeness would be used. The AI was designed with strict limitations: it could only provide fact-based answers, avoiding speculation or opinions. TV2’s editorial team closely monitored the project, which was available only until the U.S. election results were finalised.

The project team monitored the chat logs to catch any incorrect answers from the AI, but they didn’t link the chats to user profiles or personal information. To make sure the experiment followed journalistic rules, they applied the same ethical standards that govern TV2’s human journalists.

Project leader Chris Ronald Hermansen and MediaFutures industry leader Lubos Steskal presented KI-Kjetil at MediaFutures’ Annual Meeting 2024. Their talk prompted many questions from the audience, a sign that people are only beginning to form views on this kind of AI experiment in journalism.

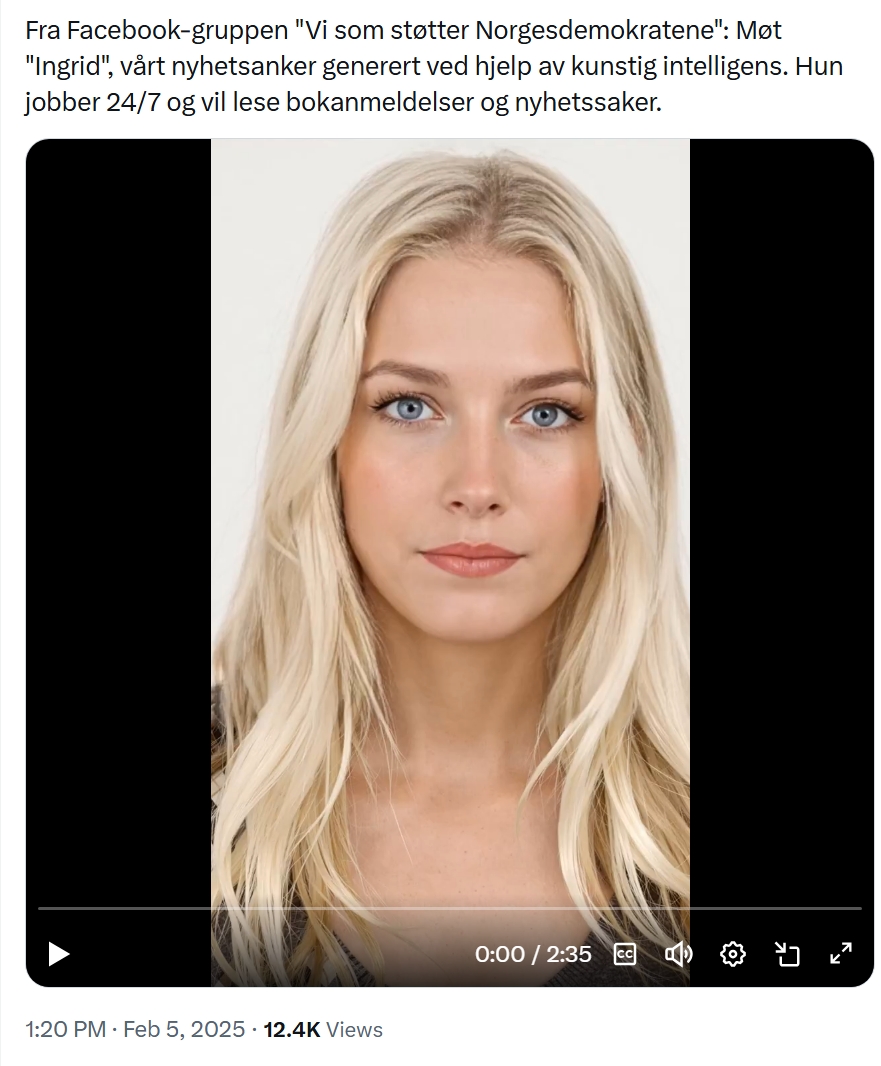

Then Came Ingrid the Newsbot

But not every AI anchor is built with the same level of care.

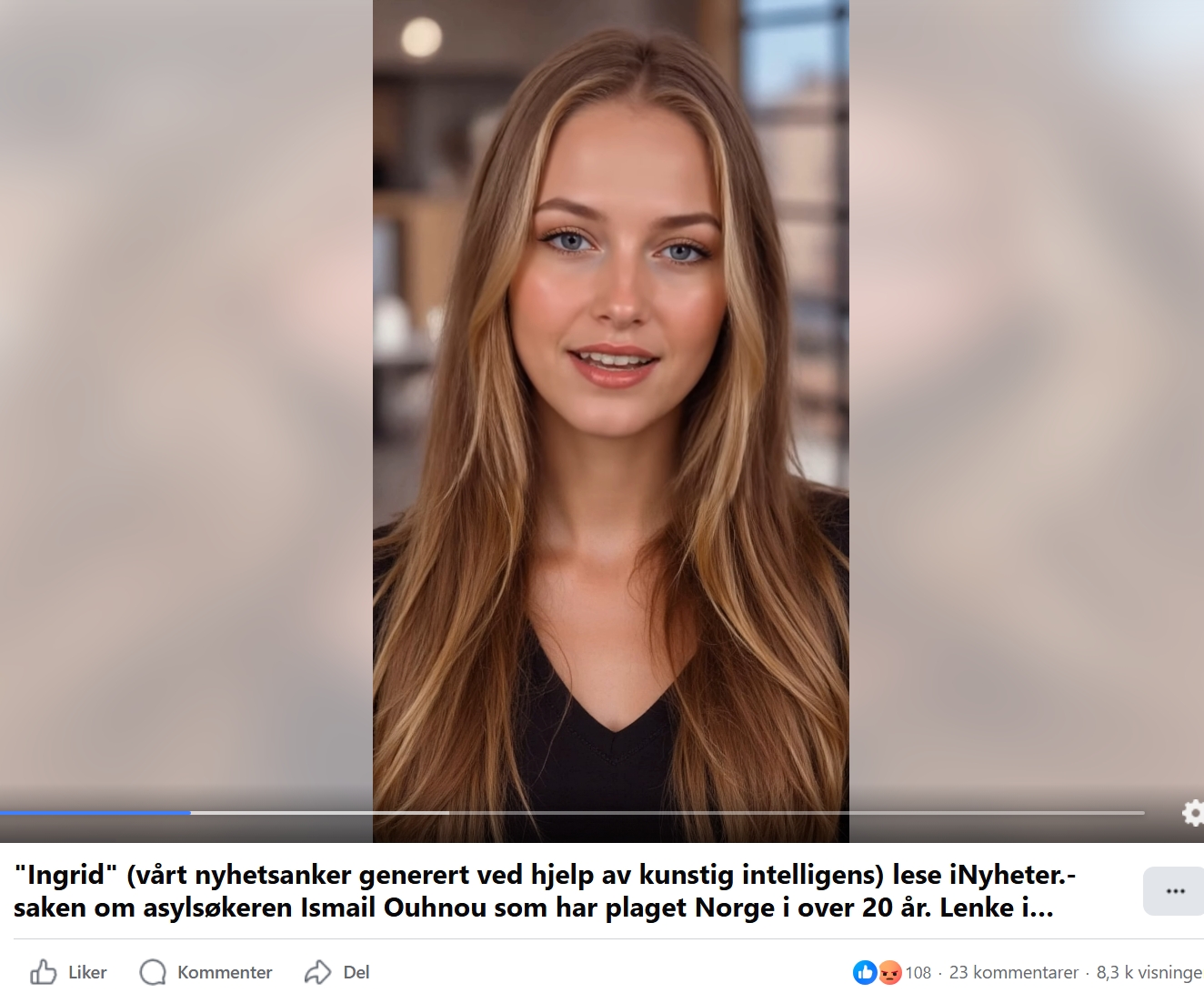

Some months later, users on social media began encountering a new digital news anchor named Ingrid. She, too, reads the news and appears as a lifelike avatar. However, unlike KI-Kjetil, Ingrid’s origins are unclear. Her “publisher” is not a verified newsroom, and there is little to no public information about who controls her content or why.

According to our industry partner Faktisk.no, Ingrid delivers a customised news feed that pulls headlines from actual media sources. However, it is unclear whether those sources have consented to the use of their material. There are no visible editorial standards, ethical guidelines, or contact information. The result is a newsbot that looks professional but operates in a black box.

Can We Trust AI in Journalism?

TV2’s experiment with KI-Kjetil was driven by a desire to make news more engaging and accessible. By using AI, they aimed to help people of all knowledge levels understand complex topics like the U.S. election. Their approach shows that AI can be a valuable tool in journalism when used responsibly. KI-Kjetil was carefully programmed to deliver fact-based answers, with human editors ensuring its content adhered to journalistic standards. Strict limitations prevented it from speculating or offering opinions, maintaining its focus on objectivity.

However, as an experimental project, KI-Kjetil was not without risks. TV2 acknowledges that mistakes were possible, which is why the newsroom actively monitored its performance and made corrections when necessary. The project was also time-limited and ended with the U.S. election, which kept it a controlled trial.

In contrast, avatars like Ingrid demonstrate the risks of deploying AI without ethical oversight. With no transparency about her creators or editorial standards, Ingrid raises concerns about misinformation and manipulation and shows why accountability matters in AI-driven journalism.

Will AI Replace Journalists?

TV2 is clear: AI cannot replace journalists. While tools like KI-Kjetil can enhance accessibility and personalise news delivery, they lack the critical thinking, ethical judgement, and decision-making that human journalists provide. AI can assist, but it cannot replicate the nuanced oversight required to maintain trust in media.

The contrasting cases of KI-Kjetil and Ingrid underscore the dual potential of AI in journalism. KI-Kjetil exemplifies how AI can support ethical reporting, while Ingrid reveals the dangers of unchecked technology. Together, they show the need for transparency and rigorous standards as AI continues to shape the future of news.

KI-Kjetil offers a glimpse into what AI can achieve, but it also raises important questions: Should AI present the news, and how can we ensure it remains trustworthy? As the media industry explores these possibilities, the balance between innovation and ethics will be critical.